
4/11/2023 Netmap pci

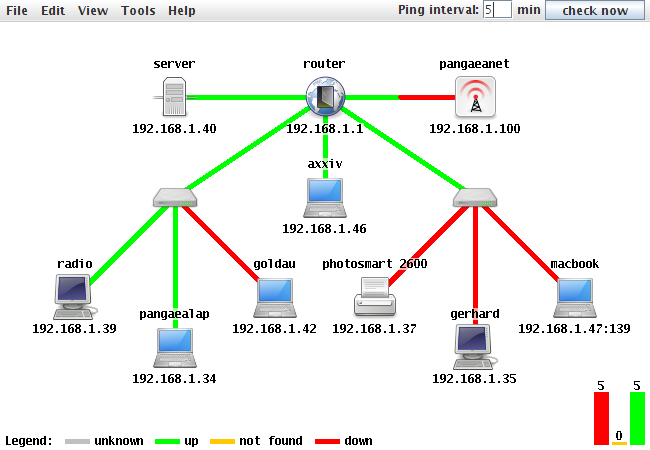

I'm testing netmap TX performance between 11-STABLE and CURRENT (same results as 12-STABLE) with 2 NICs. Here are my tests and the results with different OS versions, NICs and numbers of queues.

Testing NIC Intel X520, 1 queue configured:
`ix1: port 0xece0-0xecff mem 0xdb600000-0xdb6fffff,0xdb7fc000-0xdb7fffff irq 53 at device 0.1 numa-domain 0 on pci5`
`ix1: Using MSI-X interrupts with 2 vectors`
`ix1: PCI Express Bus: Speed 5.0GT/s Width x8`

Testing NIC Intel XL710, 1 queue configured:
`ixl0: Allocating 1 queues for PF LAN VSI 1 queues active`
`ixl0: Using MSIX interrupts with 2 vectors`
`ixl0: Link is up, 40 Gbps Full Duplex, Requested FEC: None, Negotiated FEC: None, Autoneg: True, Flow Control: None`
`941.463329 main_thread 13.564 Mpps (13.741 Mpkts 6.511 Gbps in 1013001 usec) 16.04 avg_batch 99999 min_space`
13 Mpps: much slower than 11-STABLE (42 Mpps).

And a last test, this one showing better performance in CURRENT than in 11-STABLE :)

Testing NIC Intel XL710, 6 queues configured:
`ixl0: Using MSI-X interrupts with 7 vectors`
`ixl0: Using 2048 TX descriptors and 2048 RX descriptors`
`ixl0: PCI Express Bus: Speed 8.0GT/s Width x8`
`ixl0: Allocating 8 queues for PF LAN VSI 6 queues active`
`ixl0: Using MSIX interrupts with 7 vectors`
`ixl0: using 2048 tx descriptors and 2048 rx descriptors`

What I can tell you for sure is that the difference comes from the conversion of the Intel drivers (em, ix, ixl) to iflib. This affected netmap because netmap support for iflib drivers (the Intel ones, vmx, mgb, bnxt) is provided directly by the iflib core. In other words, no explicit netmap code remains in the drivers. I would expect some inherent performance drop, due to the additional indirection introduced by iflib. However, the drop should not be as large as the one reported in your experiments.

The 2.6 Mpps you get in the first comparison makes me think that you may have accidentally left Ethernet flow control enabled. Moreover, the last experiment is rather confusing, since there you actually get a performance improvement. In 11-STABLE the driver-specific netmap code does not program the offloads, whereas in CURRENT (and 12) the iflib callbacks program the offloads even in netmap mode. This makes me think that the configuration may not be 100% aligned between the two cases. Have you tried disabling all the offloads?

`# ifconfig ix0 -txcsum -rxcsum -tso4 -tso6 -lro -txcsum6 -rxcsum6`
`ix0: PCI Express Bus: Speed 5.0GT/s Width x8`

After looking at `iflib_netmap_timer_adjust()` and `iflib_netmap_txsync()` in `sys/net/iflib.c`,
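The single-queue 40 GbE (`ixl0`) pkt-gen line quoted above can be checked with a little arithmetic and compared against Ethernet line rate. The following is a minimal Python sketch built on stated assumptions. The reported 13.741 Mpkts and 6.511 Gbps work out to 480 bits (60 bytes) per packet, so the sketch assumes the Gbps figure counts only 60-byte frames without the FCS. That is an inference from the numbers, not something the post states. The per-frame wire overhead (4-byte FCS, 8-byte preamble/SFD, 12-byte inter-frame gap) is standard Ethernet. The helper names `rate_mpps`, `payload_gbps` and `line_rate_mpps` are hypothetical.

```python
# Minimal sketch: sanity-check pkt-gen style figures and compare them with
# the theoretical Ethernet line rate. Assumption (inferred, not stated in the
# post): the reported Gbps counts 60-byte frames, excluding the FCS.

FCS_BYTES = 4                   # frame check sequence, not in the 60-byte frame
PREAMBLE_AND_IFG_BYTES = 8 + 12  # preamble/SFD + minimum inter-frame gap


def rate_mpps(packets: float, usec: float) -> float:
    """Million packets per second from a packet count and an interval in microseconds."""
    # packets per microsecond is numerically equal to millions per second
    return packets / usec


def payload_gbps(mpps: float, frame_bytes: int) -> float:
    """Throughput in Gbit/s counting only the frame bytes (no FCS, preamble or IFG)."""
    return mpps * 1e6 * frame_bytes * 8 / 1e9


def line_rate_mpps(link_gbps: float, frame_bytes: int) -> float:
    """Maximum million frames per second on a link for a given frame size (without FCS)."""
    wire_bytes = frame_bytes + FCS_BYTES + PREAMBLE_AND_IFG_BYTES
    return link_gbps * 1e9 / (wire_bytes * 8) / 1e6


if __name__ == "__main__":
    # Figures from the single-queue ixl0 run on CURRENT.
    measured = rate_mpps(13.741e6, 1013001)
    print(f"measured: {measured:.3f} Mpps, {payload_gbps(measured, 60):.3f} Gbps")
    for link in (10, 40):
        print(f"{link} GbE line rate, 60-byte frames: {line_rate_mpps(link, 60):.2f} Mpps")
```

Under these assumptions, the 40 GbE limit for 60-byte frames is about 59.5 Mpps and the 10 GbE limit about 14.9 Mpps. Both the 13 Mpps (CURRENT) and 42 Mpps (11-STABLE) single-queue XL710 figures sit below the link limit, so neither run is bounded by the wire.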

Netmap tx timer w/ queue intr enable + honor IPI_TX_INTR in ixl_txd_encap
Cleaned up netmap tx timer patch (no sysctl)
Netmap tx timer + honor IPI_TX_INTR in ixl_txd_encap
